How Google’s TPUs Are Emerging as a Serious Challenger to Nvidia’s AI Dominance

For years, Nvidia has been the undisputed leader in hardware for artificial intelligence systems. Its GPUs have become the backbone of machine learning, cloud services, and the booming generative AI sector. But Google is increasingly positioning its own custom chips, known as TPUs, as a strong alternative. As demand for AI compute surges and supply shortages persist, Google’s hardware is gaining attention for its performance, scalability, and cost efficiency. The shift suggests that Nvidia may finally be facing serious competition in a market it has long controlled.

What Makes TPUs Different

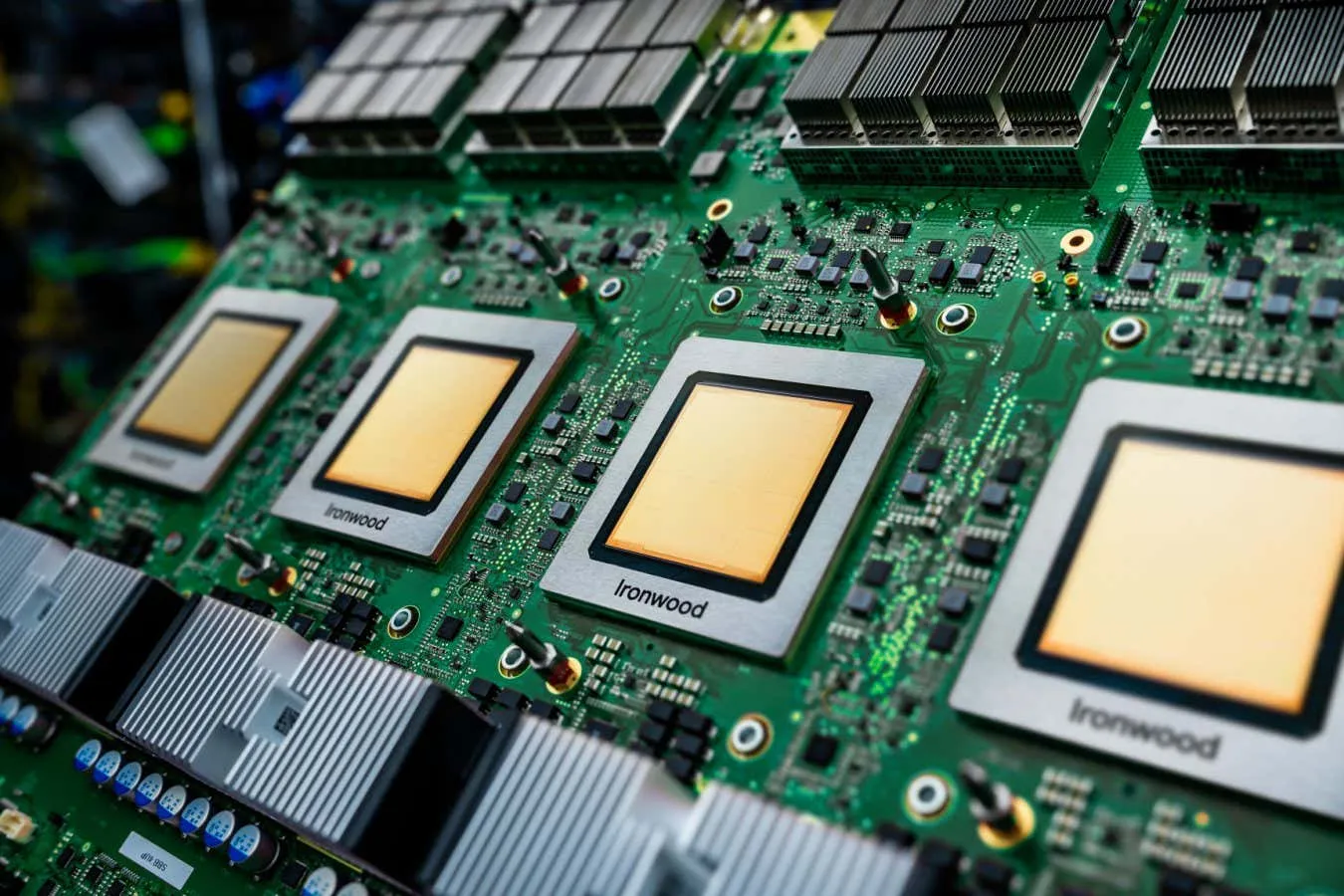

Google’s Tensor Processing Units were originally designed for internal use, powering services such as Search, YouTube, and Gmail. Unlike GPUs, which began as graphics processors and were later adapted to general-purpose parallel computing, TPUs are built specifically for machine learning workloads. This allows them to run AI models more efficiently and at lower energy cost. The latest TPU versions offer dramatic improvements in speed and throughput, allowing large models to train faster and run at scale. Engineers say this tailored approach gives TPUs an edge in handling massive neural networks that require huge amounts of parallel processing.

Scalable Chips for an Expanding AI World

As companies rush to adopt AI solutions, demand for computing power has exploded. Nvidia GPUs remain in high demand, but supply bottlenecks and rising costs have pushed developers to explore alternatives. Google has responded by opening access to its most powerful TPUs through Google Cloud. These chips can be linked into large clusters, giving developers supercomputer-level performance without building their own infrastructure. Analysts say this scale-out capability is one of the biggest reasons TPUs are becoming attractive to start-ups and established tech firms alike.
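To make the scale-out idea concrete, here is a minimal sketch in plain Python of how one training batch might be divided across linked chips in a data-parallel setup. The helper name `shard_batch` and the chip count are illustrative assumptions, not part of any Google API; real frameworks typically handle this sharding automatically.

```python
def shard_batch(batch, num_chips):
    """Split one global batch into equal per-chip shards (hypothetical helper)."""
    if num_chips <= 0:
        raise ValueError("num_chips must be positive")
    if len(batch) % num_chips != 0:
        raise ValueError("batch size must divide evenly across chips")
    per_chip = len(batch) // num_chips
    # Each chip receives a contiguous slice of the global batch.
    return [batch[i * per_chip:(i + 1) * per_chip] for i in range(num_chips)]


# Illustrative numbers only: 8 examples spread over 4 chips.
global_batch = list(range(8))
shards = shard_batch(global_batch, num_chips=4)
```

In this simplified picture, each chip processes its own shard in parallel, so adding chips lets a larger global batch be handled in the same step.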

Performance Gains in Real Workloads

Independent tests and industry feedback suggest that TPUs deliver competitive performance in many AI tasks, especially deep learning training. For some models, they have matched or surpassed comparable Nvidia hardware in speed and efficiency. Developers point out that TPUs are particularly strong at handling large batches of data, which is essential for training modern language models. Google also provides a highly optimized software stack, making it easier for engineers to migrate existing code and integrate TPUs into their workflows.

Cost Efficiency Becomes a Key Advantage

Beyond performance, TPUs are gaining traction because they can be more cost-effective. As AI workloads grow, electricity and cooling expenses have become major concerns for companies and data center operators alike. TPUs are designed to consume less energy per computation, reducing operational costs over time. Google Cloud has also introduced flexible pricing models that allow users to pay only for the compute they need. This is especially appealing for smaller companies struggling with the high price of Nvidia chips amid global shortages and rising demand.
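The energy argument comes down to simple arithmetic, sketched below in Python. Every number here is a placeholder chosen only to show the calculation; none is a measured figure for any TPU or GPU, and the function name `energy_cost` is an illustrative assumption.

```python
def energy_cost(total_operations, joules_per_operation, price_per_kwh):
    """Return the electricity cost of a workload under the given assumptions."""
    joules = total_operations * joules_per_operation
    kwh = joules / 3.6e6  # 1 kWh equals 3.6 million joules
    return kwh * price_per_kwh


# Placeholder inputs: the same workload on two hypothetical chips,
# where chip B uses half the energy per operation of chip A.
workload = 1e18
cost_a = energy_cost(workload, joules_per_operation=2e-11, price_per_kwh=0.10)
cost_b = energy_cost(workload, joules_per_operation=1e-11, price_per_kwh=0.10)
savings = cost_a - cost_b
```

Under these assumptions, halving the energy per operation halves the electricity bill for the same workload, before any cooling costs are counted.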

A Growing Ecosystem Around Google’s Hardware

Google is rapidly expanding its AI ecosystem to support broader TPU adoption. It has improved its developer tools, expanded cloud documentation, and built partnerships to attract enterprises. The company is also working closely with start-ups training state-of-the-art models on TPUs, showcasing success stories that demonstrate real-world results. This ecosystem growth is important because developers historically gravitated toward Nvidia due to its mature software tools. As Google continues closing that gap, TPUs are becoming easier and more appealing to integrate.

What This Means for the Future of AI Computing

The rise of Google’s TPUs signals a major shift in the AI hardware landscape. Nvidia remains dominant and continues to innovate, but the presence of a strong competitor is reshaping market dynamics. For AI developers and companies building next-generation models, having more hardware options is a clear advantage. It reduces dependency on a single supplier, increases pricing flexibility, and encourages faster innovation. Analysts predict that competition between the two giants will accelerate the development of faster, more efficient chips in the years ahead. For now, Google’s TPUs have firmly established themselves as a credible, high-performance alternative that is already influencing how AI systems are built and scaled.